Understanding new regulations, risks, and tooling in a rapidly evolving AI regulation landscape

You’ve probably heard the old joke: “What happens when you put 10 economists in a room? You’ll get 11 opinions.”

You could easily apply the same quip to the world of artificial intelligence. We can all agree that AI is fundamental to the enterprise world. However, it’s hard to find a consensus understanding of how AI systems work and where they’re headed next.

AI innovation is moving so quickly that even the people responsible for implementing and monitoring AI systems in their organizations (CISOs, Chief Data Officers, Heads of AI, etc.) find it difficult to understand the impact these technologies are having on their enterprise.

That’s why the recent vote from the European Parliament is so important. On June 14, the Parliament passed a draft of the EU AI Act, which would require technical documentation and registration of “high-risk” AI systems being used within the EU.

It’s a watershed moment for the world of AI, providing a set of focused guardrails, frameworks and stiff penalties to regulate the use of artificial intelligence. This legislation is poised to become the global standard for AI compliance, just as the EU’s General Data Protection Regulation (GDPR) has evolved into the leading data protection framework.

The EU AI Act should play a vital role in injecting some form of standardization into how we evaluate the security and trustworthiness of AI models. Regulators are racing to reduce the risk of the fast-moving AI boom, and company leaders will be moving equally fast to make sure they stay compliant.

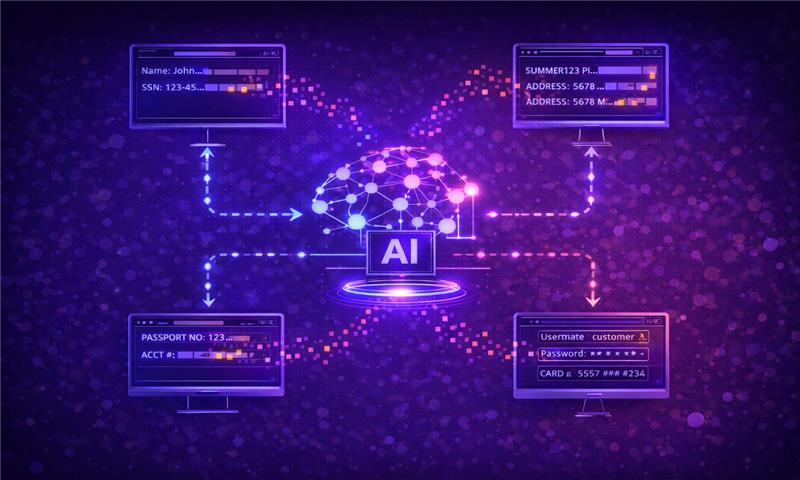

Enterprises need ways to gain visibility into how their AI systems work. They need oversight of how their systems are staying secure from adversarial threats, preserving the security of valuable data sets and interacting with other AI infrastructure. This is why we built Cranium: to help enterprises ensure their AI systems are secure, compliant and trustworthy so that the AI revolution can continue to bring helpful innovations to the world.

Let’s explore the impact of this week’s EU announcement, and how Cranium’s newly launched AI Card can help enterprises navigate the regulatory environment.

The EU AI Act, GDPR and the changing regulatory landscape

The draft law has passed but is not yet in force, and the EU AI Act has already drawn a lot of attention. That’s because regulators are fearful of AI’s rapid pace of innovation. Enterprises, meanwhile, are wary of potentially significant fines. Companies have already incurred significant penalties for GDPR violations, with Meta recently facing the largest fine so far at €1.2 billion. Infringing on the EU AI Act promises similar ramifications.

It’s easy to draw parallels between the rise of DevOps and the rise of AI. Software development really started to boom about 25 years ago. For the first 10 years of this movement, cybersecurity wasn’t even a consideration. It took a while before businesses started suffering from malware and data breaches.

Once that started happening, the cybersecurity industry took off. I was smack in the middle of this rise as the CEO of Prevalent, which specializes in third-party risk management. GDPR came along to reduce some of the data privacy risks that had been exposed.

But that’s where the parallels stop. CI/CD pipelines are well defined and built to produce the same output every time. AI models, on the other hand, are trained to learn and adapt. It’s much more difficult to manage risks when the software itself is constantly evolving.

The EU has done a great job, even in its initial drafts, of emphasizing that this framework must constantly evolve to keep pace. This trickles down to enterprise leaders, who in turn must stay ahead of regulatory changes to avoid penalties.

Strategies for increasing alignment around AI risk

Enterprise leaders must focus on awareness, education, and collaboration to set their organizations up for success. It starts from the top. I’ve spoken with data science and AI leaders who weren’t even familiar with the NIST or EU AI Act frameworks. How could the rest of the organization know what’s coming if these experts don’t?

It’s such a new field, and it’s moving so fast. Organizations can’t be expected to immediately know exactly how to respond to new frameworks.

There’s truly so much education that’s needed. The best way to catch up is to start right away. Cybersecurity and compliance leaders need to loop data science experts into their discussions. Open communication will help increase alignment.

Additionally, enterprises must educate themselves on the tools that help data science teams apply these frameworks to the AI systems that the organization is using.

How the Cranium AI Card helps organizations address regulatory and compliance concerns

Integrating enterprise or generative AI into your organization is a complicated process. There’s a lot going on, and many stakeholders are involved. Most implementations include:

- Using a hyperscaler platform, such as Azure ML, Amazon SageMaker or Google Vertex AI

- Integrating third-party tool kits

- Working with a service provider to develop models

- Pulling in open-source models from hubs such as Hugging Face

- Using internal and external data sets
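One way to picture this supply chain is as a simple inventory. The Python sketch below is purely illustrative: the `Component` fields, the `visibility_gaps` helper and the sample entries are hypothetical names chosen for this example, and none of it reflects how Cranium’s AI Card is actually structured. It just shows how quickly gaps appear once each element is written down with an owner and a review status.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ComponentKind(Enum):
    # The five element types listed above (labels are illustrative).
    HYPERSCALER = "hyperscaler platform"
    THIRD_PARTY_TOOLKIT = "third-party toolkit"
    SERVICE_PROVIDER = "service provider"
    OPEN_SOURCE_MODEL = "open-source model"
    DATA_SET = "data set"


@dataclass
class Component:
    name: str
    kind: ComponentKind
    owner: Optional[str] = None        # team accountable for this element (assumed field)
    security_reviewed: bool = False    # has security looked at it yet? (assumed field)


def visibility_gaps(inventory: List[Component]) -> List[Component]:
    """Return every component that has no owner or no security review."""
    return [c for c in inventory if c.owner is None or not c.security_reviewed]


# Hypothetical sample inventory.
inventory = [
    Component("Azure ML workspace", ComponentKind.HYPERSCALER,
              owner="platform-team", security_reviewed=True),
    Component("model pulled from Hugging Face", ComponentKind.OPEN_SOURCE_MODEL),
    Component("internal customer data set", ComponentKind.DATA_SET,
              owner="data-team"),
]

gaps = visibility_gaps(inventory)
for component in gaps:
    print(f"{component.name} ({component.kind.value}): needs an owner or a security review")
```

Even in this toy inventory, two of the three entries are flagged, which is roughly the situation many security teams find themselves in today.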

There is risk and uncertainty within each element of this AI supply chain. Yet at this point, cybersecurity and data security teams are lacking visibility. This is where Cranium’s AI Card can help.

The AI Card allows organizations to easily gather information about the trustworthiness and compliance of their AI models, share it with clients and regulators, and gain visibility into the security of their vendors’ AI systems. Enterprise leaders can use the AI Card to gain a better understanding of the systems they’re creating and the stakeholders involved in the process.

AI is fundamentally changing the way that business works. That’s poised to continue, as long as we have visibility into how these systems operate. If you’re looking to learn what’s behind your AI, chat with the Cranium team to learn more.